1.1 Introduction

Artificial intelligence (AI) represents humanity’s attempt to create machines that can perceive, reason, learn, and act intelligently. While computers excel at computationally intensive tasks, matching human intelligence and intuition has been an aspirational goal since the inception of general-purpose computers.

The field of AI has evolved through several distinct phases:

Early Excitement (1950s-1960s): Initial optimism about machines that could reason

First AI Winter (1970s): Funding and interest declined as early systems, constrained by limited computational power, fell short of expectations

Expert Systems Era (1980s): Rise of knowledge-based systems

Second AI Winter (late 1980s-1990s): Collapse of the Lisp machine market and funding cuts

Machine Learning Renaissance (2000s-2010s): Revival through statistical methods

Deep Learning Revolution (2010s-present): Breakthrough with neural networks and massive data

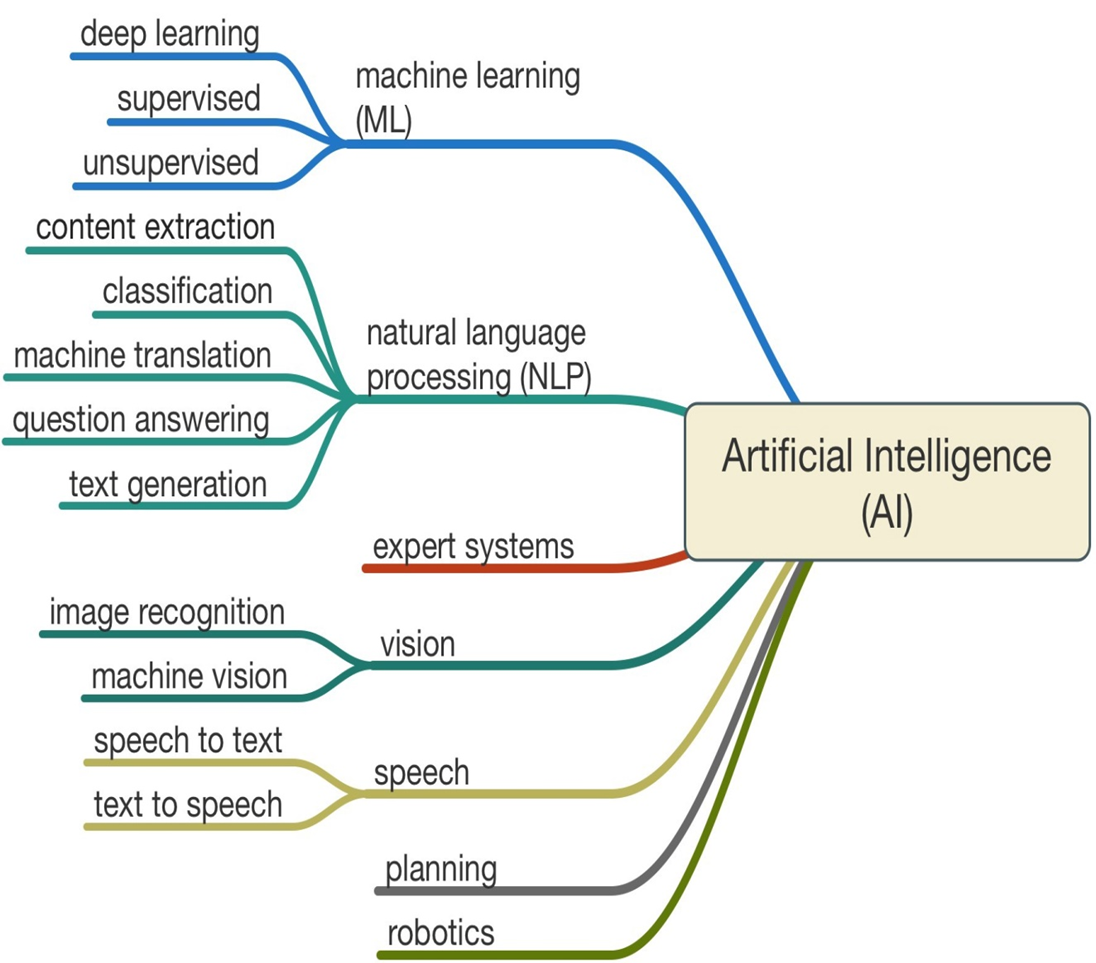

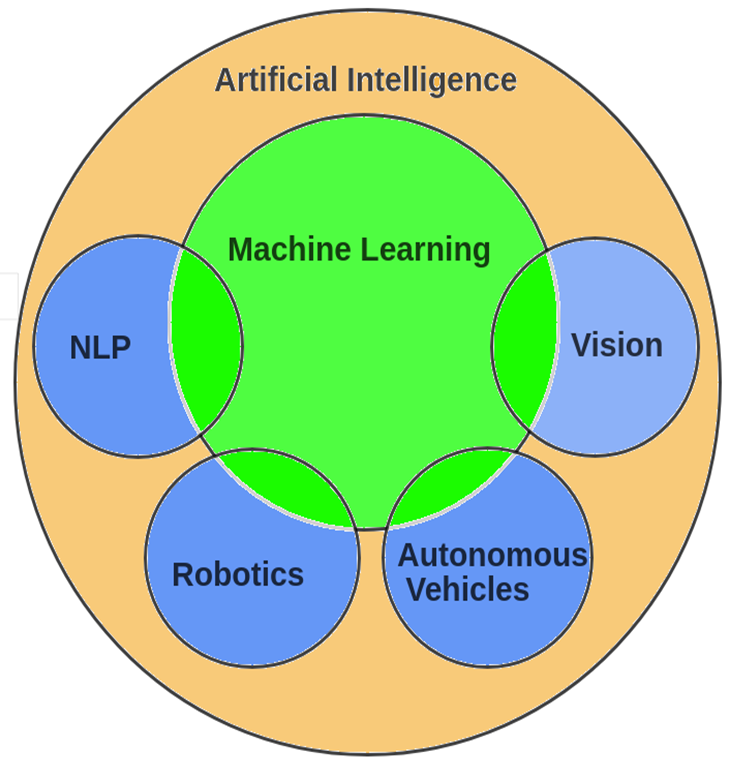

1.2 What is Artificial Intelligence?

Artificial intelligence is a branch of computer science concerned with the automation of intelligent behavior.

Examples include speech recognition, visual perception, and language translation.

1.3 Roots of Artificial Intelligence

1.4 Timeline of AI History

The Turing Test

In 1950, Alan Turing proposed a test for machine intelligence, which he called the imitation game, that remains influential today.

Test Procedure:

All communication is text-based

A judge puts questions to two hidden respondents

One respondent is human; the other is a computer

The computer “passes” if the judge cannot reliably tell which respondent is the machine
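The procedure above can be sketched as a small simulation. This is only an illustrative toy, not a standard implementation: the names `run_imitation_game` and `fooled_rate`, the "A"/"B" labels, and the canned respondents are all assumptions made for this example. A single round says little on its own; "cannot reliably distinguish" corresponds to the judge being fooled in roughly half of many rounds.

```python
import random
from typing import Callable, Dict, List

# A respondent maps a text question to a text answer (hypothetical type alias).
Respondent = Callable[[str], str]
# A judge reads the labelled transcript and names the label it thinks is the machine.
Judge = Callable[[Dict[str, List[str]]], str]


def run_imitation_game(
    questions: List[str],
    human: Respondent,
    machine: Respondent,
    judge: Judge,
    rng: random.Random,
) -> bool:
    """Run one text-only round; return True if the judge fails to pick out the machine."""
    # Hide both identities behind the labels "A" and "B", assigned in random order.
    labels = ["A", "B"]
    rng.shuffle(labels)
    hidden = {labels[0]: human, labels[1]: machine}
    machine_label = labels[1]

    # The judge sees only the text of the answers, keyed by label.
    transcript = {
        label: [hidden[label](q) for q in questions] for label in ("A", "B")
    }

    guess = judge(transcript)
    return guess != machine_label


def fooled_rate(trials: int, seed: int = 0) -> float:
    """Fraction of rounds in which a toy judge misidentifies the machine."""
    rng = random.Random(seed)
    questions = ["What did you have for breakfast?"]

    # Toy respondents that answer identically, so the judge can only guess.
    def human(question: str) -> str:
        return "Toast and coffee."

    def machine(question: str) -> str:
        return "Toast and coffee."

    def guessing_judge(transcript: Dict[str, List[str]]) -> str:
        return rng.choice(["A", "B"])

    fooled = sum(
        run_imitation_game(questions, human, machine, guessing_judge, rng)
        for _ in range(trials)
    )
    return fooled / trials
```

Calling `fooled_rate(1000)` estimates how often the judge is fooled when the two respondents are indistinguishable; a machine whose answers give it away would push that rate toward zero.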

Current Status:

No system is widely accepted to have convincingly passed the full Turing Test

Modern chatbots (e.g., ChatGPT) show impressive conversational abilities but still have clear limitations

Artificial general intelligence (AGI) remains an aspirational goal, not a present reality

Criticisms of the Turing Test:

Tests deception rather than intelligence

Focuses on linguistic ability only

Doesn’t require understanding or consciousness

May be too anthropocentric

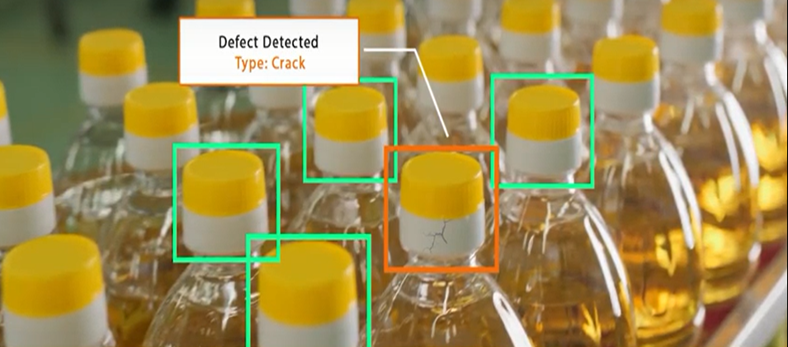

Applications of AI in Industry

Anomaly detection: Identify abnormal behavior in processes and equipment

Process optimization: Improve yield

Decision support: Make smarter decisions and minimize risk

Forecasting: Predict future scenarios with neural networks
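As a concrete illustration of the first application, here is a minimal sketch of anomaly detection on equipment readings. It assumes a simple z-score rule (flag any reading far from the mean, measured in standard deviations), which is only one of many possible techniques. The function name `zscore_anomalies`, the default threshold of 3.0, and the sample readings are hypothetical choices for this example.

```python
from statistics import mean, stdev
from typing import List


def zscore_anomalies(readings: List[float], threshold: float = 3.0) -> List[int]:
    """Return indices of readings more than `threshold` standard deviations from the mean."""
    # A standard deviation needs at least two data points.
    if len(readings) < 2:
        return []

    mu = mean(readings)
    sigma = stdev(readings)  # sample standard deviation

    # Constant readings have no spread, so nothing can be flagged.
    if sigma == 0:
        return []

    return [i for i, x in enumerate(readings) if abs(x - mu) / sigma > threshold]


# Hypothetical sensor data: twenty normal readings followed by one spike.
readings = [10.0] * 20 + [50.0]
spike_indices = zscore_anomalies(readings)
```

The z-score rule is easy to explain but fragile: a large outlier inflates the mean and standard deviation it is compared against, so in practice more robust statistics or learned models are often preferred.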

1.5 Is artificial intelligence dangerous?

AI can be dangerous if misused or poorly designed

Risks include:

Job displacement

Privacy concerns

Bias and discrimination

Autonomous weapons

Ethical AI development and regulation are important for mitigating these risks